Prepare a Kubernetes (k8s) cluster yourself. If you are not sure how to set one up, see my earlier blog post:

https://blog.csdn.net/qq_46902467/article/details/126660847

My k8s cluster nodes are:

k8s-master1 10.0.0.10

k8s-node1 10.0.0.11

k8s-node2 10.0.0.12

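I. Install the NFS server

# 10.0.0.11 is the NFS server; 10.0.0.10 and 10.0.0.12 are NFS clients

1. Create the shared directory

mkdir -p /data/nfs

2. Install the NFS packages

yum install -y nfs-utils

3. Edit /etc/exports and add the directory to share together with the clients allowed to mount it

# "*" means every IP address may mount the share
cat >> /etc/exports << EOF
/data/nfs/ *(rw,sync,no_subtree_check,no_root_squash)
EOF

4. Start rpcbind and nfs, then enable both at boot

systemctl start rpcbind
systemctl start nfs

# Check the status
systemctl status nfs

# Enable at boot
systemctl enable rpcbind
systemctl enable nfs

5. Once the services are running, reload the export table so the configuration takes effect

exportfs -r

# Verify the exports
exportfs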
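Exporting to `*` together with `no_root_squash` lets any host that can reach 10.0.0.11 mount the share as root. A narrower entry could look like the sketch below. The 10.0.0.0/24 subnet is an assumption inferred from the three node addresses listed earlier, and the temporary file is only there so the entry can be reviewed before it replaces /etc/exports.

```shell
# Assumption: every cluster node lives in 10.0.0.0/24 (inferred, not stated in the article).
candidate="$(mktemp)"

# Quoted delimiter: the entry is written exactly as typed.
cat > "$candidate" << 'EOF'
/data/nfs/ 10.0.0.0/24(rw,sync,no_subtree_check,no_root_squash)
EOF

cat "$candidate"

# After reviewing it, install and reload on the NFS server (needs root):
#   cp "$candidate" /etc/exports && exportfs -r && exportfs -v
```

`exportfs -v` prints the active options per export, which makes it easy to confirm that only the intended subnet is listed.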

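II. Install the NFS client

1. Install the NFS packages

yum install -y nfs-utils

2. Start rpcbind and enable it at boot; clients do not need to run the nfs service

systemctl start rpcbind
systemctl enable rpcbind

3. Check that the NFS server is exporting the shared directory

showmount -e 10.0.0.11

4. Test-mount the share from the client

mount -t nfs 10.0.0.11:/data/nfs /mnt/

# Check the mount
df -Th

5. Unmount

umount /mnt/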

III. Deploy Prometheus

1. Create the working directories

mkdir -p /usr/local/src/monitoring/{prometheus,grafana,alertmanager,node-exporter}

2. Create the namespace

kubectl create ns monitoring

3. Create the PV and PVC for persistent storage (to keep things simple, I use a static PV)

cat >> /usr/local/src/monitoring/prometheus/prometheus-pv.yaml << EOF
apiVersion: v1
kind: PersistentVolume
metadata:
  name: prometheus-data-pv
spec:
  capacity:
    storage: 5Gi
  accessModes:
    - ReadWriteOnce
  nfs:
    path: /data/nfs/prometheus_data
    server: 10.0.0.11
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: prometheus-data-pvc
  namespace: monitoring
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 5Gi
EOF
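The static PVs in this guide point at subdirectories of /data/nfs (prometheus_data here, grafana_data and alertmanager_data further down), and an NFS mount fails when the exported path does not exist. The article does not show those directories being created, so the following is a minimal sketch of that step; `make_pv_dirs` is a hypothetical helper name, not something from the original setup.

```shell
# make_pv_dirs is a hypothetical helper: it creates one directory per static PV
# underneath the NFS export root passed as the first argument.
make_pv_dirs() {
  nfs_root="$1"
  for dir in prometheus_data grafana_data alertmanager_data; do
    mkdir -p "$nfs_root/$dir" || return 1
  done
}

# On the NFS server (10.0.0.11), as root:
#   make_pv_dirs /data/nfs
```

The Grafana manifest later runs as UID 472 and mounts the sub-path grafana, so it may also be necessary to create /data/nfs/grafana_data/grafana and chown it to 472:472; treat that as something to verify on your own setup.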

4. Create the RBAC resources

cat >> /usr/local/src/monitoring/prometheus/prometheus-rbac.yaml << EOF
apiVersion: v1
kind: ServiceAccount
metadata:
  name: prometheus
  namespace: monitoring
  labels:
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
---
# rbac.authorization.k8s.io/v1beta1 was removed in Kubernetes 1.22; v1 works on old and new clusters
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: prometheus
  labels:
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
rules:
  - apiGroups:
      - ""
    resources:
      - nodes
      - nodes/metrics
      - services
      - endpoints
      - pods
    verbs:
      - get
      - list
      - watch
  - apiGroups:
      - ""
    resources:
      - configmaps
    verbs:
      - get
  - nonResourceURLs:
      - "/metrics"
    verbs:
      - get
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: prometheus
  labels:
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: prometheus
subjects:
  - kind: ServiceAccount
    name: prometheus
    namespace: monitoring
EOF

5. Create the Prometheus scrape configuration

# The heredoc delimiter is quoted ('EOF') so the shell leaves ${1} and $1 in the relabel rules untouched
cat >> /usr/local/src/monitoring/prometheus/prometheusconfig.yaml << 'EOF'
apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-config
  namespace: monitoring
data:
  prometheus.yml: |
    global:
      scrape_interval: 15s
      evaluation_interval: 15s
    alerting:
      alertmanagers:
      - static_configs:
        - targets: ["alertmanager.monitoring.svc:9093"]
    rule_files:
    # The prometheus-rules ConfigMap stores its files as general.rules and node.rules
    - /etc/prometheus/rules/*.rules
    scrape_configs:
    - job_name: 'prometheus'
      static_configs:
      - targets: ['localhost:9090']
    - job_name: kubernetes-coredns
      honor_labels: false
      kubernetes_sd_configs:
      - role: endpoints
      scrape_interval: 15s
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - action: keep
        source_labels:
        - __meta_kubernetes_service_label_k8s_app
        regex: kube-dns
      - action: keep
        source_labels:
        - __meta_kubernetes_endpoint_port_name
        regex: metrics
      - source_labels:
        - __meta_kubernetes_endpoint_address_target_kind
        - __meta_kubernetes_endpoint_address_target_name
        separator: ;
        regex: Node;(.*)
        replacement: ${1}
        target_label: node
      - source_labels:
        - __meta_kubernetes_endpoint_address_target_kind
        - __meta_kubernetes_endpoint_address_target_name
        separator: ;
        regex: Pod;(.*)
        replacement: ${1}
        target_label: pod
      - source_labels:
        - __meta_kubernetes_namespace
        target_label: namespace
      - source_labels:
        - __meta_kubernetes_service_name
        target_label: service
      - source_labels:
        - __meta_kubernetes_pod_name
        target_label: pod
      - source_labels:
        - __meta_kubernetes_service_name
        target_label: job
        replacement: ${1}
      - source_labels:
        - __meta_kubernetes_service_label_k8s_app
        target_label: job
        regex: (.+)
        replacement: ${1}
      - target_label: endpoint
        replacement: metrics
    - job_name: kubernetes-apiserver
      honor_labels: false
      kubernetes_sd_configs:
      - role: endpoints
      scrape_interval: 30s
      scheme: https
      tls_config:
        insecure_skip_verify: false
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
        server_name: kubernetes
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - action: keep
        source_labels:
        - __meta_kubernetes_service_label_component
        regex: apiserver
      - action: keep
        source_labels:
        - __meta_kubernetes_service_label_provider
        regex: kubernetes
      - action: keep
        source_labels:
        - __meta_kubernetes_endpoint_port_name
        regex: https
      - source_labels:
        - __meta_kubernetes_endpoint_address_target_kind
        - __meta_kubernetes_endpoint_address_target_name
        separator: ;
        regex: Node;(.*)
        replacement: ${1}
        target_label: node
      - source_labels:
        - __meta_kubernetes_endpoint_address_target_kind
        - __meta_kubernetes_endpoint_address_target_name
        separator: ;
        regex: Pod;(.*)
        replacement: ${1}
        target_label: pod
      - source_labels:
        - __meta_kubernetes_namespace
        target_label: namespace
      - source_labels:
        - __meta_kubernetes_service_name
        target_label: service
      - source_labels:
        - __meta_kubernetes_pod_name
        target_label: pod
      - source_labels:
        - __meta_kubernetes_service_name
        target_label: job
        replacement: ${1}
      - source_labels:
        - __meta_kubernetes_service_label_component
        target_label: job
        regex: (.+)
        replacement: ${1}
      - target_label: endpoint
        replacement: https
      metric_relabel_configs:
      - source_labels:
        - __name__
        regex: etcd_(debugging|disk|request|server).*
        action: drop
      - source_labels:
        - __name__
        regex: apiserver_admission_controller_admission_latencies_seconds_.*
        action: drop
      - source_labels:
        - __name__
        regex: apiserver_admission_step_admission_latencies_seconds_.*
        action: drop
    - job_name: kubernetes-controller-manager
      honor_labels: false
      kubernetes_sd_configs:
      - role: endpoints
      scrape_interval: 30s
      relabel_configs:
      - action: keep
        source_labels:
        - __meta_kubernetes_service_label_k8s_app
        regex: kube-controller-manager
      - action: keep
        source_labels:
        - __meta_kubernetes_endpoint_port_name
        regex: http-metrics
      - source_labels:
        - __meta_kubernetes_endpoint_address_target_kind
        - __meta_kubernetes_endpoint_address_target_name
        separator: ;
        regex: Node;(.*)
        replacement: ${1}
        target_label: node
      - source_labels:
        - __meta_kubernetes_endpoint_address_target_kind
        - __meta_kubernetes_endpoint_address_target_name
        separator: ;
        regex: Pod;(.*)
        replacement: ${1}
        target_label: pod
      - source_labels:
        - __meta_kubernetes_namespace
        target_label: namespace
      - source_labels:
        - __meta_kubernetes_service_name
        target_label: service
      - source_labels:
        - __meta_kubernetes_pod_name
        target_label: pod
      - source_labels:
        - __meta_kubernetes_service_name
        target_label: job
        replacement: ${1}
      - source_labels:
        - __meta_kubernetes_service_label_k8s_app
        target_label: job
        regex: (.+)
        replacement: ${1}
      - target_label: endpoint
        replacement: http-metrics
      metric_relabel_configs:
      - source_labels:
        - __name__
        regex: etcd_(debugging|disk|request|server).*
        action: drop
    - job_name: kubernetes-scheduler
      honor_labels: false
      kubernetes_sd_configs:
      - role: endpoints
      scrape_interval: 30s
      relabel_configs:
      - action: keep
        source_labels:
        - __meta_kubernetes_service_label_k8s_app
        regex: kube-scheduler
      - action: keep
        source_labels:
        - __meta_kubernetes_endpoint_port_name
        regex: http-metrics
      - source_labels:
        - __meta_kubernetes_endpoint_address_target_kind
        - __meta_kubernetes_endpoint_address_target_name
        separator: ;
        regex: Node;(.*)
        replacement: ${1}
        target_label: node
      - source_labels:
        - __meta_kubernetes_endpoint_address_target_kind
        - __meta_kubernetes_endpoint_address_target_name
        separator: ;
        regex: Pod;(.*)
        replacement: ${1}
        target_label: pod
      - source_labels:
        - __meta_kubernetes_namespace
        target_label: namespace
      - source_labels:
        - __meta_kubernetes_service_name
        target_label: service
      - source_labels:
        - __meta_kubernetes_pod_name
        target_label: pod
      - source_labels:
        - __meta_kubernetes_service_name
        target_label: job
        replacement: ${1}
      - source_labels:
        - __meta_kubernetes_service_label_k8s_app
        target_label: job
        regex: (.+)
        replacement: ${1}
      - target_label: endpoint
        replacement: http-metrics
    - job_name: m-alarm/kube-state-metrics/0
      honor_labels: true
      kubernetes_sd_configs:
      - role: endpoints
      scrape_interval: 30s
      scrape_timeout: 30s
      scheme: https
      tls_config:
        insecure_skip_verify: true
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - action: keep
        source_labels:
        - __meta_kubernetes_service_label_k8s_app
        regex: kube-state-metrics
      - action: keep
        source_labels:
        - __meta_kubernetes_endpoint_port_name
        regex: https-main
      - source_labels:
        - __meta_kubernetes_endpoint_address_target_kind
        - __meta_kubernetes_endpoint_address_target_name
        separator: ;
        regex: Node;(.*)
        replacement: ${1}
        target_label: node
      - source_labels:
        - __meta_kubernetes_endpoint_address_target_kind
        - __meta_kubernetes_endpoint_address_target_name
        separator: ;
        regex: Pod;(.*)
        replacement: ${1}
        target_label: pod
      - source_labels:
        - __meta_kubernetes_namespace
        target_label: namespace
      - source_labels:
        - __meta_kubernetes_service_name
        target_label: service
      - source_labels:
        - __meta_kubernetes_pod_name
        target_label: pod
      - source_labels:
        - __meta_kubernetes_service_name
        target_label: job
        replacement: ${1}
      - source_labels:
        - __meta_kubernetes_service_label_k8s_app
        target_label: job
        regex: (.+)
        replacement: ${1}
      - target_label: endpoint
        replacement: https-main
      - regex: (pod|service|endpoint|namespace)
        action: labeldrop
    - job_name: m-alarm/kube-state-metrics/1
      honor_labels: false
      kubernetes_sd_configs:
      - role: endpoints
      scrape_interval: 30s
      scheme: https
      tls_config:
        insecure_skip_verify: true
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - action: keep
        source_labels:
        - __meta_kubernetes_service_label_k8s_app
        regex: kube-state-metrics
      - action: keep
        source_labels:
        - __meta_kubernetes_endpoint_port_name
        regex: https-self
      - source_labels:
        - __meta_kubernetes_endpoint_address_target_kind
        - __meta_kubernetes_endpoint_address_target_name
        separator: ;
        regex: Node;(.*)
        replacement: ${1}
        target_label: node
      - source_labels:
        - __meta_kubernetes_endpoint_address_target_kind
        - __meta_kubernetes_endpoint_address_target_name
        separator: ;
        regex: Pod;(.*)
        replacement: ${1}
        target_label: pod
      - source_labels:
        - __meta_kubernetes_namespace
        target_label: namespace
      - source_labels:
        - __meta_kubernetes_service_name
        target_label: service
      - source_labels:
        - __meta_kubernetes_pod_name
        target_label: pod
      - source_labels:
        - __meta_kubernetes_service_name
        target_label: job
        replacement: ${1}
      - source_labels:
        - __meta_kubernetes_service_label_k8s_app
        target_label: job
        regex: (.+)
        replacement: ${1}
      - target_label: endpoint
        replacement: https-self
    - job_name: kubernetes-kubelet
      honor_labels: true
      kubernetes_sd_configs:
      - role: endpoints
      scrape_interval: 30s
      scheme: https
      tls_config:
        insecure_skip_verify: true
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - action: keep
        source_labels:
        - __meta_kubernetes_service_label_k8s_app
        regex: kubelet
      - action: keep
        source_labels:
        - __meta_kubernetes_endpoint_port_name
        regex: https-metrics
      - source_labels:
        - __meta_kubernetes_endpoint_address_target_kind
        - __meta_kubernetes_endpoint_address_target_name
        separator: ;
        regex: Node;(.*)
        replacement: ${1}
        target_label: node
      - source_labels:
        - __meta_kubernetes_endpoint_address_target_kind
        - __meta_kubernetes_endpoint_address_target_name
        separator: ;
        regex: Pod;(.*)
        replacement: ${1}
        target_label: pod
      - source_labels:
        - __meta_kubernetes_namespace
        target_label: namespace
      - source_labels:
        - __meta_kubernetes_service_name
        target_label: service
      - source_labels:
        - __meta_kubernetes_pod_name
        target_label: pod
      - source_labels:
        - __meta_kubernetes_service_name
        target_label: job
        replacement: ${1}
      - source_labels:
        - __meta_kubernetes_service_label_k8s_app
        target_label: job
        regex: (.+)
        replacement: ${1}
      - target_label: endpoint
        replacement: https-metrics
      - source_labels:
        - __metrics_path__
        target_label: metrics_path
    - job_name: kubernetes-cadvisor
      honor_labels: true
      kubernetes_sd_configs:
      - role: endpoints
      scrape_interval: 30s
      metrics_path: /metrics/cadvisor
      scheme: https
      tls_config:
        insecure_skip_verify: true
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - action: keep
        source_labels:
        - __meta_kubernetes_service_label_k8s_app
        regex: kubelet
      - action: keep
        source_labels:
        - __meta_kubernetes_endpoint_port_name
        regex: https-metrics
      - source_labels:
        - __meta_kubernetes_endpoint_address_target_kind
        - __meta_kubernetes_endpoint_address_target_name
        separator: ;
        regex: Node;(.*)
        replacement: ${1}
        target_label: node
      - source_labels:
        - __meta_kubernetes_endpoint_address_target_kind
        - __meta_kubernetes_endpoint_address_target_name
        separator: ;
        regex: Pod;(.*)
        replacement: ${1}
        target_label: pod
      - source_labels:
        - __meta_kubernetes_namespace
        target_label: namespace
      - source_labels:
        - __meta_kubernetes_service_name
        target_label: service
      - source_labels:
        - __meta_kubernetes_pod_name
        target_label: pod
      - source_labels:
        - __meta_kubernetes_service_name
        target_label: job
        replacement: ${1}
      - source_labels:
        - __meta_kubernetes_service_label_k8s_app
        target_label: job
        regex: (.+)
        replacement: ${1}
      - target_label: endpoint
        replacement: https-metrics
      - source_labels:
        - __metrics_path__
        target_label: metrics_path
      metric_relabel_configs:
      - source_labels:
        - __name__
        regex: container_(network_tcp_usage_total|network_udp_usage_total|tasks_state|cpu_load_average_10s)
        action: drop
    - job_name: kubernetes-node
      honor_labels: false
      kubernetes_sd_configs:
      - role: endpoints
      scrape_interval: 30s
      scheme: https
      tls_config:
        insecure_skip_verify: true
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - action: keep
        source_labels:
        - __meta_kubernetes_service_label_k8s_app
        regex: node-exporter
      - action: keep
        source_labels:
        - __meta_kubernetes_endpoint_port_name
        regex: https
      - source_labels:
        - __meta_kubernetes_endpoint_address_target_kind
        - __meta_kubernetes_endpoint_address_target_name
        separator: ;
        regex: Node;(.*)
        replacement: ${1}
        target_label: node
      - source_labels:
        - __meta_kubernetes_endpoint_address_target_kind
        - __meta_kubernetes_endpoint_address_target_name
        separator: ;
        regex: Pod;(.*)
        replacement: ${1}
        target_label: pod
      - source_labels:
        - __meta_kubernetes_namespace
        target_label: namespace
      - source_labels:
        - __meta_kubernetes_service_name
        target_label: service
      - source_labels:
        - __meta_kubernetes_pod_name
        target_label: pod
      - source_labels:
        - __meta_kubernetes_service_name
        target_label: job
        replacement: ${1}
      - source_labels:
        - __meta_kubernetes_service_label_k8s_app
        target_label: job
        regex: (.+)
        replacement: ${1}
      - target_label: endpoint
        replacement: https
      - source_labels:
        - __meta_kubernetes_pod_node_name
        target_label: instance
        regex: (.*)
        replacement: $1
        action: replace
EOF
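The relabel rules in this ConfigMap contain `${1}` and `$1`, which the shell also treats as parameter expansions when a heredoc delimiter is unquoted, so the values can silently vanish from the generated file. The sketch below only illustrates that shell behaviour; the variable names are made up for the demonstration.

```shell
# Set $1 to an empty string: the result matches a shell with no positional
# parameters, and the snippet also works when `set -u` is active.
set -- ""

# Unquoted delimiter: the shell expands ${1} before cat ever sees the text.
unquoted="$(cat << EOF
replacement: ${1}
EOF
)"

# Quoted delimiter: the body is passed through literally.
quoted="$(cat << 'EOF'
replacement: ${1}
EOF
)"

printf 'unquoted delimiter -> [%s]\n' "$unquoted"
printf 'quoted delimiter   -> [%s]\n' "$quoted"
```

The same applies to `{{ $labels.instance }}` and `{{ $value }}` in the alerting rules, so quoting the delimiter is the safer choice whenever a manifest contains a dollar sign.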

6. Create the Prometheus alerting rules

# The delimiter is quoted ('EOF') so the shell does not expand $labels and $value inside the templates
cat >> /usr/local/src/monitoring/prometheus/prometheus-rules.yaml << 'EOF'
apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-rules
  namespace: monitoring
data:
  general.rules: |
    groups:
    - name: general.rules
      rules:
      - alert: InstanceDown
        expr: up == 0
        for: 1m
        labels:
          severity: error
        annotations:
          summary: "Instance {{ $labels.instance }} is down"
          description: "{{ $labels.instance }} of job {{ $labels.job }} has been down for more than 1 minute."
  node.rules: |
    groups:
    - name: node.rules
      rules:
      - alert: NodeFilesystemUsage
        expr: 100 - (node_filesystem_free_bytes{fstype=~"ext4|xfs"} / node_filesystem_size_bytes{fstype=~"ext4|xfs"} * 100) > 80
        for: 1m
        labels:
          severity: warning
        annotations:
          summary: "Instance {{ $labels.instance }}: partition {{ $labels.mountpoint }} usage is too high"
          description: "{{ $labels.instance }}: partition {{ $labels.mountpoint }} is more than 80% full (current value: {{ $value }})"
      - alert: NodeMemoryUsage
        expr: 100 - (node_memory_MemFree_bytes+node_memory_Cached_bytes+node_memory_Buffers_bytes) / node_memory_MemTotal_bytes * 100 > 80
        for: 1m
        labels:
          severity: warning
        annotations:
          summary: "Instance {{ $labels.instance }} memory usage is too high"
          description: "{{ $labels.instance }} memory usage is above 80% (current value: {{ $value }})"
      - alert: NodeCPUUsage
        expr: 100 - (avg(irate(node_cpu_seconds_total{mode="idle"}[5m])) by (instance) * 100) > 60
        for: 1m
        labels:
          severity: warning
        annotations:
          summary: "Instance {{ $labels.instance }} CPU usage is too high"
          description: "{{ $labels.instance }} CPU usage is above 60% (current value: {{ $value }})"
EOF

7. Create the Prometheus StatefulSet

cat >> /usr/local/src/monitoring/prometheus/prometheus.yaml << EOF
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: prometheus
  namespace: monitoring
  labels:
    app: prometheus
spec:
  serviceName: "prometheus"
  replicas: 1
  selector:
    matchLabels:
      app: prometheus
  template:
    metadata:
      labels:
        app: prometheus
    spec:
      securityContext:
        runAsUser: 0
      serviceAccountName: prometheus
      serviceAccount: prometheus
      volumes:
      - name: data
        persistentVolumeClaim:
          # Must match the PVC created in step 3
          claimName: prometheus-data-pvc
      - name: prometheus-config
        configMap:
          name: prometheus-config
      - name: prometheus-rules
        configMap:
          name: prometheus-rules
      - name: localtime
        hostPath:
          path: /etc/localtime
      containers:
      - name: prometheus-container
        image: prom/prometheus:v2.32.1
        imagePullPolicy: Always
        # The official prom/prometheus image ships the binary at /bin/prometheus and the
        # console files under /usr/share/prometheus; /app/prometheus only exists in custom images
        command:
        - "/bin/prometheus"
        args:
        - "--config.file=/etc/prometheus/prometheus.yml"
        - "--storage.tsdb.path=/app/prometheus/data"
        - "--web.console.libraries=/usr/share/prometheus/console_libraries"
        - "--web.console.templates=/usr/share/prometheus/consoles"
        - "--log.level=info"
        - "--web.enable-admin-api"
        ports:
        - name: prometheus
          containerPort: 9090
        readinessProbe:
          httpGet:
            path: /-/ready
            port: 9090
          initialDelaySeconds: 30
          timeoutSeconds: 30
        livenessProbe:
          httpGet:
            path: /-/healthy
            port: 9090
          initialDelaySeconds: 30
          timeoutSeconds: 30
        resources:
          requests:
            cpu: 100m
            memory: 30Mi
          limits:
            cpu: 500m
            memory: 500Mi
        volumeMounts:
        - mountPath: "/app/prometheus/data"
          name: data
        - mountPath: "/etc/prometheus"
          name: prometheus-config
        - name: prometheus-rules
          mountPath: /etc/prometheus/rules
        - name: localtime
          mountPath: /etc/localtime
EOF

8. Create the Prometheus Service

cat >> /usr/local/src/monitoring/prometheus/prometheus-svc.yaml << EOF
kind: Service
apiVersion: v1
metadata:
  name: prometheus
  # Same namespace as the StatefulSet and the Ingress
  namespace: monitoring
  labels:
    kubernetes.io/name: "Prometheus"
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
spec:
  ports:
    - name: http
      port: 9090
      protocol: TCP
      targetPort: 9090
  selector:
    # Must match the pod label set by the StatefulSet (app: prometheus)
    app: prometheus
EOF

9. Create the Prometheus Ingress

cat >> /usr/local/src/monitoring/prometheus/prometheus-ingress.yaml << EOF
# extensions/v1beta1 Ingress was removed in Kubernetes 1.22; newer clusters need
# networking.k8s.io/v1, which also changes the backend fields and requires pathType
apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: prometheus
  namespace: monitoring
spec:
  rules:
  - host: prometheus.com
    http:
      paths:
      - path: /
        backend:
          serviceName: prometheus
          servicePort: http
EOF

10. Deploy Prometheus

kubectl apply -f /usr/local/src/monitoring/prometheus/
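After applying the manifests it helps to confirm that the claim is bound and the pod is ready before moving on. The commands below are a minimal sketch of such a check; they assume kubectl points at this cluster, that the StatefulSet pod is named prometheus-0 (the default name for the first replica), and that the official prom/prometheus image is in use, which ships promtool.

```shell
# The PVC should show STATUS "Bound"; a "Pending" claim usually means the PV did not match.
kubectl -n monitoring get pv,pvc

# Wait for the StatefulSet rollout to finish (or time out after three minutes).
kubectl -n monitoring rollout status statefulset/prometheus --timeout=180s

# Overview of what was created.
kubectl -n monitoring get pods,svc,ingress -o wide

# Validate the mounted configuration and the rule files it references.
kubectl -n monitoring exec prometheus-0 -- promtool check config /etc/prometheus/prometheus.yml
```

If the pod stays in CrashLoopBackOff, `kubectl -n monitoring logs prometheus-0` normally shows whether the configuration or the storage path is the problem.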

IV. Deploy Grafana

1. Create the PV and PVC for persistent storage

cat >>/usr/local/src/monitoring/grafana/grafana-pv.yaml << EOF
apiVersion: v1
kind: PersistentVolume
metadata:
  name: grafana-data-pv
spec:
  capacity:
    storage: 5Gi
  accessModes:
    - ReadWriteOnce
  nfs:
    path: /data/nfs/grafana_data
    server: 10.0.0.11
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: grafana-data-pvc
  namespace: monitoring
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 5Gi
EOF

2. Create the Grafana StatefulSet

cat >>/usr/local/src/monitoring/grafana/grafana.yaml << EOF
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: grafana
  namespace: monitoring
spec:
  serviceName: "grafana"
  replicas: 1
  selector:
    matchLabels:
      app: grafana
  template:
    metadata:
      labels:
        app: grafana
    spec:
      containers:
      - name: grafana
        image: grafana/grafana:8.3.3
        ports:
        - containerPort: 3000
          protocol: TCP
        resources:
          limits:
            cpu: 100m
            memory: 256Mi
          requests:
            cpu: 100m
            memory: 256Mi
        volumeMounts:
        - name: grafana-data
          mountPath: /var/lib/grafana
          subPath: grafana
      securityContext:
        fsGroup: 472
        runAsUser: 472
      volumes:
      - name: grafana-data
        persistentVolumeClaim:
          # Must match the PVC created in step 1
          claimName: grafana-data-pvc
EOF

3. Create the Grafana Service

cat >>/usr/local/src/monitoring/grafana/grafana-svc.yaml << EOF
apiVersion: v1
kind: Service
metadata:
  name: grafana
  namespace: monitoring
spec:
  ports:
    - name: http
      port: 3000
      targetPort: 3000
  selector:
    app: grafana
EOF

4. Create the Grafana Ingress

cat >>/usr/local/src/monitoring/grafana/grafana-ingress.yaml << EOF
apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: grafana
  namespace: monitoring
spec:
  rules:
  - host: grafana.com
    http:
      paths:
      - path: /
        backend:
          serviceName: grafana
          servicePort: http
EOF

5. Deploy Grafana

kubectl apply -f /usr/local/src/monitoring/grafana/

V. Deploy Alertmanager

1. Create the PV and PVC for persistent storage

cat >>/usr/local/src/monitoring/alertmanager/alertmanager-pv.yaml << EOF
apiVersion: v1
kind: PersistentVolume
metadata:
  name: alertmanager-data-pv
spec:
  capacity:
    storage: 5Gi
  accessModes:
    - ReadWriteOnce
  nfs:
    path: /data/nfs/alertmanager_data
    server: 10.0.0.11
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: alertmanager-data-pvc
  namespace: monitoring
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 5Gi
EOF

2. Create the Alertmanager e-mail notification configuration

cat >>/usr/local/src/monitoring/alertmanager/alertmanager-configmap.yaml << EOF
apiVersion: v1
kind: ConfigMap
metadata:
  # Name of the ConfigMap
  name: alertmanager-config
  namespace: monitoring
  labels:
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: EnsureExists
data:
  alertmanager.yml: |
    global:
      resolve_timeout: 5m
      # SMTP settings used for alert e-mails
      smtp_smarthost: 'smtp.163.com:25'
      smtp_from: 'w.jjwx@163.com'
      smtp_auth_username: 'w.jjwx@163.com'
      smtp_auth_password: 'your-password'
    receivers:
    - name: default-receiver
      email_configs:
      - to: "1965161128@qq.com"
    route:
      group_interval: 1m
      group_wait: 10s
      receiver: default-receiver
      repeat_interval: 1m
EOF

3. Create the Alertmanager Deployment

cat >>/usr/local/src/monitoring/alertmanager/alertmanager.yaml << EOF
apiVersion: apps/v1
kind: Deployment
metadata:
  name: alertmanager
  namespace: monitoring
  labels:
    k8s-app: alertmanager
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
    version: v0.14.0
spec:
  replicas: 1
  selector:
    matchLabels:
      k8s-app: alertmanager
      version: v0.14.0
  template:
    metadata:
      labels:
        k8s-app: alertmanager
        version: v0.14.0
    spec:
      priorityClassName: system-cluster-critical
      containers:
        - name: prometheus-alertmanager
          image: "prom/alertmanager:v0.14.0"
          imagePullPolicy: "IfNotPresent"
          args:
            - --config.file=/etc/config/alertmanager.yml
            - --storage.path=/data
            - --web.external-url=/
          ports:
            - containerPort: 9093
          readinessProbe:
            httpGet:
              path: /#/status
              port: 9093
            initialDelaySeconds: 30
            timeoutSeconds: 30
          volumeMounts:
            - name: config-volume
              mountPath: /etc/config
            - name: storage-volume
              mountPath: "/data"
              subPath: ""
          resources:
            limits:
              cpu: 10m
              memory: 50Mi
            requests:
              cpu: 10m
              memory: 50Mi
        - name: prometheus-alertmanager-configmap-reload
          image: "jimmidyson/configmap-reload:v0.1"
          imagePullPolicy: "IfNotPresent"
          args:
            - --volume-dir=/etc/config
            - --webhook-url=http://localhost:9093/-/reload
          volumeMounts:
            - name: config-volume
              mountPath: /etc/config
              readOnly: true
          resources:
            limits:
              cpu: 10m
              memory: 10Mi
            requests:
              cpu: 10m
              memory: 10Mi
      volumes:
        - name: config-volume
          configMap:
            name: alertmanager-config
        - name: storage-volume
          persistentVolumeClaim:
            # Must match the PVC created in step 1
            claimName: alertmanager-data-pvc
EOF

4. Create the Alertmanager Service

cat >>/usr/local/src/monitoring/alertmanager/alertmanager-svc.yaml << EOF
apiVersion: v1
kind: Service
metadata:
  name: alertmanager
  namespace: monitoring
  labels:
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
    kubernetes.io/name: "Alertmanager"
spec:
  ports:
    - name: http
      port: 9093
      protocol: TCP
      targetPort: 9093
  selector:
    k8s-app: alertmanager
EOF

5. Create the Alertmanager Ingress

cat >> /usr/local/src/monitoring/alertmanager/alertmanager-ingress.yaml << EOF
apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: alertmanager
  namespace: monitoring
spec:
  rules:
  - host: alertmanager.com
    http:
      paths:
      - path: /
        backend:
          serviceName: alertmanager
          servicePort: http
EOF

6. Deploy Alertmanager

kubectl apply -f /usr/local/src/monitoring/alertmanager/
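To check the e-mail route without waiting for a real alert, a test alert can be pushed straight into Alertmanager. This is a minimal sketch under a few assumptions: the Service is reachable through a local port-forward, the alert name and labels are invented for the test, and the v1 API path is used because the manifest pins v0.14.0 (newer Alertmanager releases expose /api/v2/alerts instead).

```shell
# In a separate terminal, forward the Service port to this machine:
#   kubectl -n monitoring port-forward svc/alertmanager 9093:9093

# Push one hand-made alert; TestAlert and manual-test are placeholder values.
curl -s -X POST \
  -H 'Content-Type: application/json' \
  http://localhost:9093/api/v1/alerts \
  -d '[{"labels":{"alertname":"TestAlert","severity":"warning","instance":"manual-test"},"annotations":{"summary":"Manual test alert"}}]'
```

With `group_wait: 10s` in the route, the notification should leave roughly ten seconds later; if nothing arrives, the Alertmanager container log is the first place to look for SMTP errors.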

VI. Deploy node-exporter

cat >>/usr/local/src/monitoring/node-exporter/node-exporter-ds.yaml << EOF
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: node-exporter
  namespace: monitoring
  labels:
    name: node-exporter
spec:
  selector:
    matchLabels:
      name: node-exporter
  template:
    metadata:
      labels:
        name: node-exporter
    spec:
      tolerations:
      - key: "node-role.kubernetes.io/master"
        operator: "Exists"
        effect: "NoSchedule"
      # With hostNetwork, hostIPC and hostPID all set to true, the containers in this Pod use the
      # host's network directly, talk to the host over IPC (inter-process communication), and can
      # see every process running on the host.
      # Because hostNetwork is true, port 9100 is opened directly on the host, so no Service is needed.
      hostPID: true
      hostIPC: true
      hostNetwork: true
      containers:
      - name: node-exporter
        image: prom/node-exporter:v1.3.0
        ports:
        - containerPort: 9100
        resources:
          requests:
            cpu: 0.15  # the container needs at least 0.15 CPU cores
        securityContext:
          privileged: true  # run in privileged mode
        args:
        - --path.procfs=/host/proc  # where the host's /proc is mounted
        - --path.sysfs=/host/sys    # where the host's /sys is mounted
        - --path.rootfs=/host
        # Mount the host's /dev, /proc and /sys into the container, because much of the
        # node data is collected by reading these files.
        volumeMounts:
        - name: dev
          mountPath: /host/dev
          readOnly: true
        - name: proc
          mountPath: /host/proc
          readOnly: true
        - name: sys
          mountPath: /host/sys
          readOnly: true
        - name: rootfs
          mountPath: /host
          readOnly: true
      volumes:
      - name: proc
        hostPath:
          path: /proc
      - name: dev
        hostPath:
          path: /dev
      - name: sys
        hostPath:
          path: /sys
      - name: rootfs
        hostPath:
          path: /
EOF

# Deploy
kubectl apply -f /usr/local/src/monitoring/node-exporter/node-exporter-ds.yaml
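Since the DaemonSet uses the host network, each node should answer on port 9100 once its pod is running. The following sketch checks that; it assumes the commands are run from a machine that can reach the node IPs listed at the top of this article.

```shell
# DESIRED and READY should both equal the number of nodes (three in this cluster).
kubectl -n monitoring get daemonset node-exporter
kubectl -n monitoring get pods -l name=node-exporter -o wide

# Query one node directly; node_load1 is a standard node-exporter metric.
curl -s http://10.0.0.11:9100/metrics | grep '^node_load1 '
```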

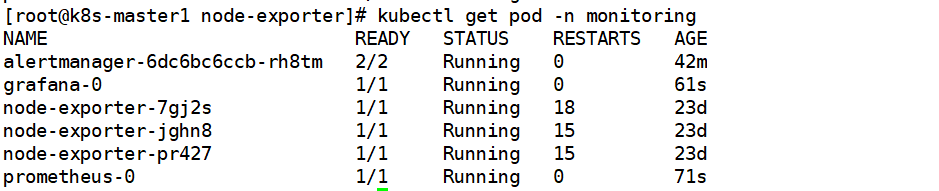

Check that all pods are in the Running state.

Add the host names to the hosts file on your workstation:

C:\Windows\System32\drivers\etc\hosts

10.0.0.11 prometheus.com grafana.com gitlab.com alertmanager.com

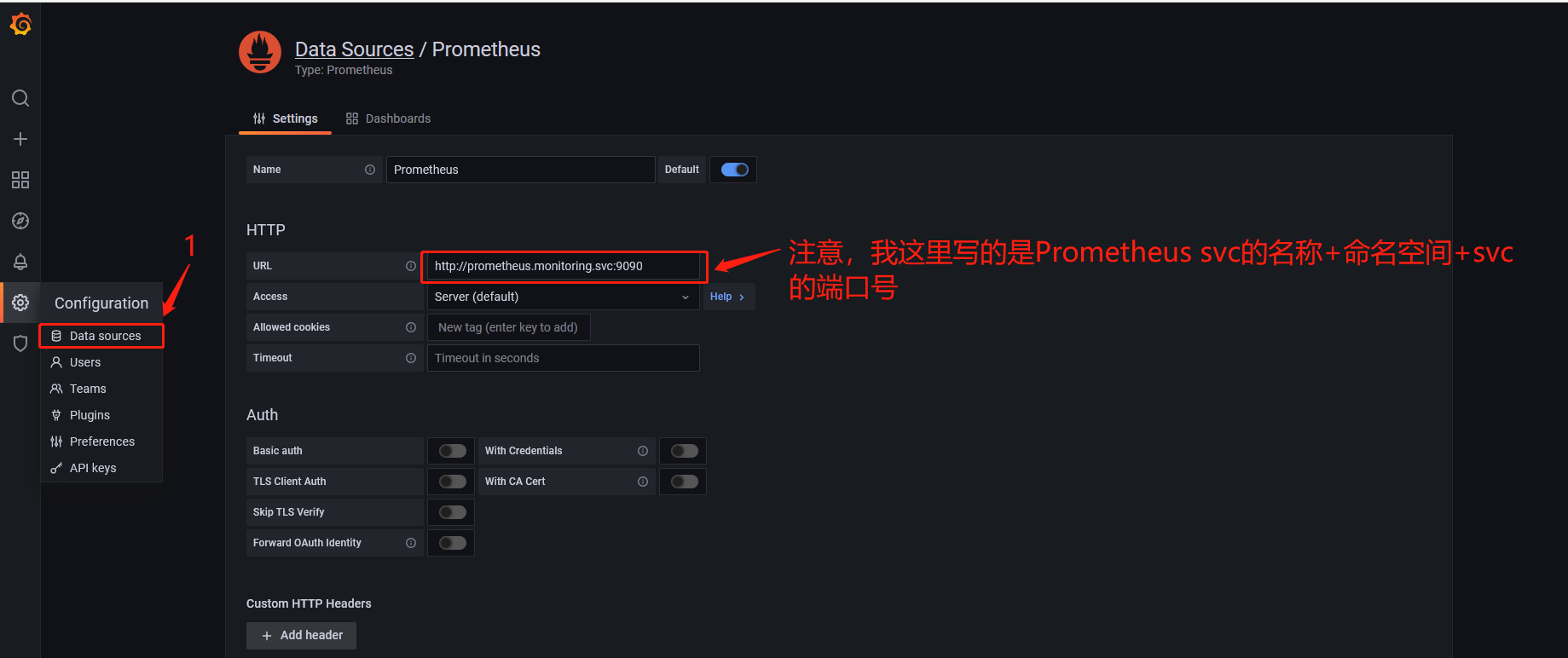

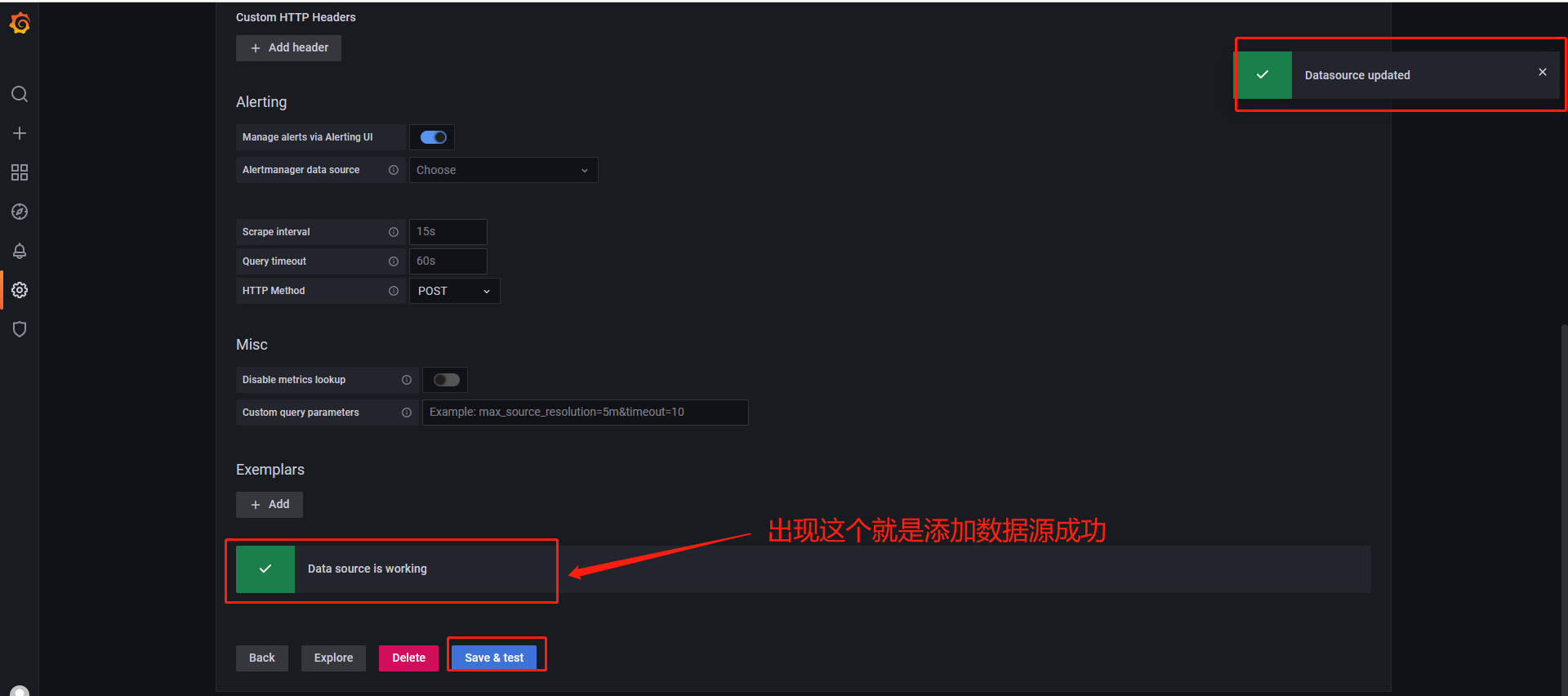

七、grafana添加Prometheus源

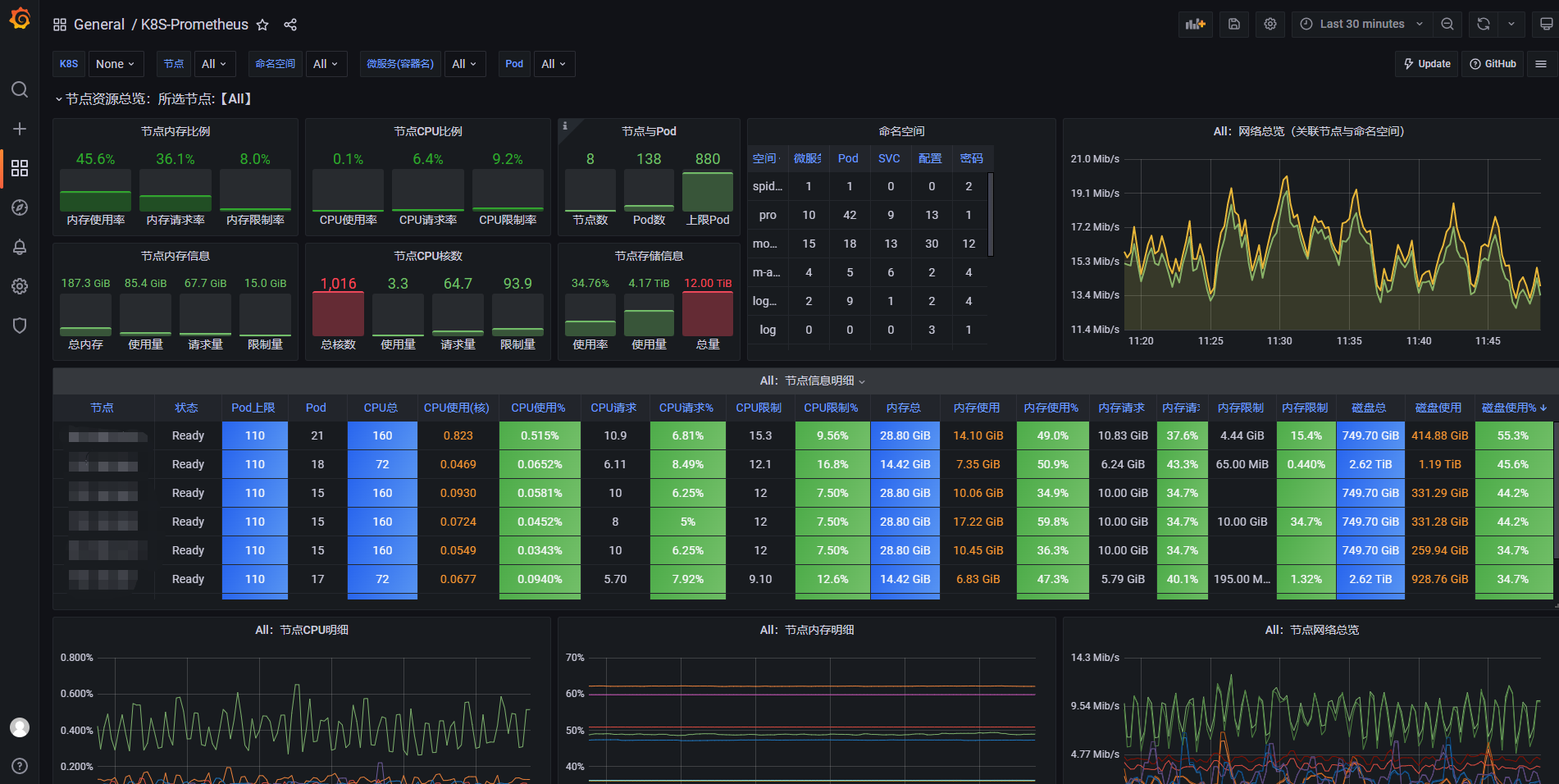
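The data source can also be registered through Grafana's HTTP API instead of the UI. This is a sketch under several assumptions that the article does not state: Grafana still uses the default admin/admin login, grafana.com resolves to the ingress as configured in the hosts file, and the Prometheus Service lives in the monitoring namespace so that it is reachable as prometheus.monitoring.svc:9090. `GRAFANA_URL` is a hypothetical variable introduced only for this example.

```shell
# Hypothetical override; defaults to the ingress host used in this article.
GRAFANA_URL="${GRAFANA_URL:-http://grafana.com}"

# Create a Prometheus data source that Grafana queries server-side ("proxy" access).
curl -s -u admin:admin \
  -H 'Content-Type: application/json' \
  -X POST "$GRAFANA_URL/api/datasources" \
  -d '{"name":"Prometheus","type":"prometheus","url":"http://prometheus.monitoring.svc:9090","access":"proxy","isDefault":true}'
```

Using the in-cluster Service name keeps the data source working even when the ingress host name changes.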

八、添加dashboard

k8s dashboard模板

ID:13105

k8s node-exporter模板

ID:8919
